Gomocup 2017, the 18th tournament (April the 21st-23rd, 2017)

Gomocup 2017 took place on April 21st-23rd, 2017.

There were 5 computers available whose configurations were as follows:

- Windows 10, x64 Intel Core i7-6700K (4/4.2GHz), 8GB RAM

- Windows 10, x64 AMD Ryzen 1700X (3.4/3.8GHz), 16GB RAM

- Windows Server 2016, x64 Dual Intel Xeon E5-2666 v3 (2.9/3.3 GHz), 60GB RAM

- Windows 10, x64 Dual Intel Xeon E5-2660 v3 (2.6/3.3 GHz), 256GB RAM

- Windows 10, x64 Dual Intel Xeon E5-2660 v3 (2.6/3.3 GHz), 256GB RAM

For each game type, only cores with similar speed were used.

The openings for Gomocup 2017 were chosen by the following people (sorted alphabetically according to last names):

- Alexander Bogatirev - Gomoku player, member of the Gomoku Committee of RIF, member of the organizing committee of the Russian Gomoku Championship, winner of the Russian Gomoku Cup 2016.

- Ko-Han Chen - Gomoku player, 7 dan, secretary-general of Taiwan Renju Federation, currently ranked 1st in the rating of active established Gomoku players.

- Aivo Oll - Renju player, 7 dan, former Estonian champion, European champion, and world champion.

- Zijun Shu - Gomoku player and AI researcher, contributor of several Gomoku AIs.

- Tao Tao - Renju theory researcher who has published research on, and new designs of, Renju openings, taken part in the promotion of Renju, and translated several Japanese Renju books.

- Rong Xiao - Gomoku expert who proposed the gomoku opening rule "swap after first move", which is one of the most popular gomoku opening rules in China.

Thank you all!

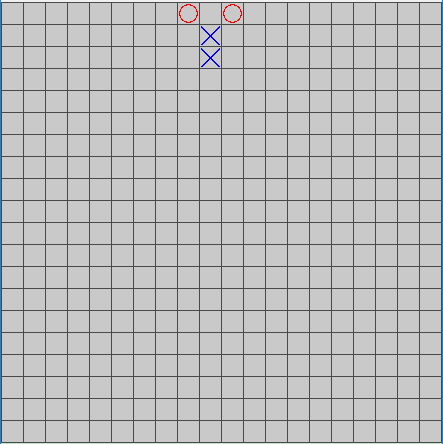

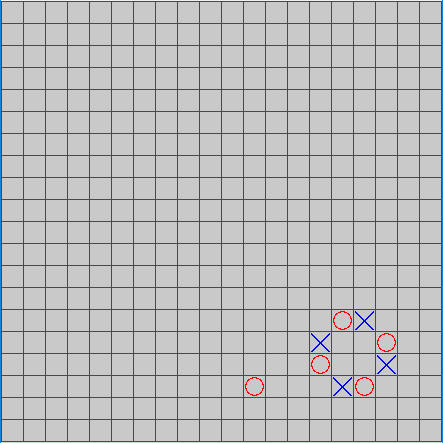

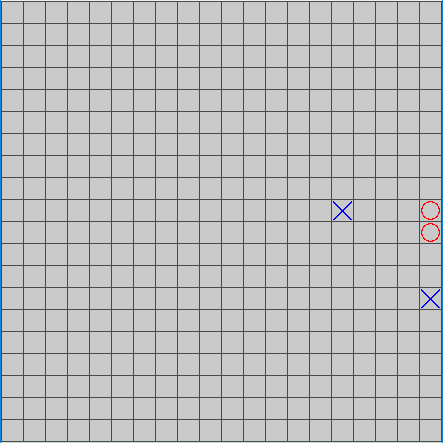

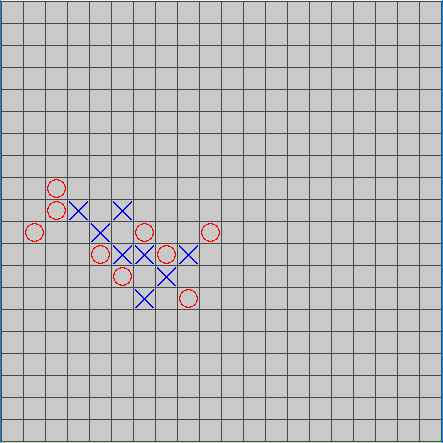

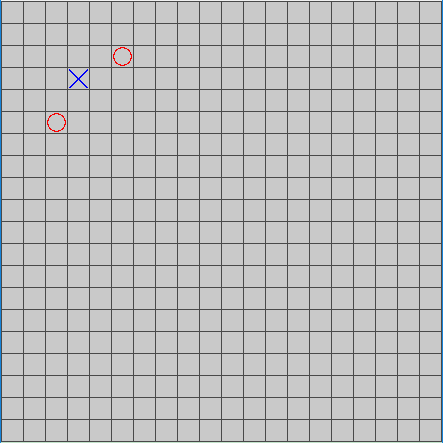

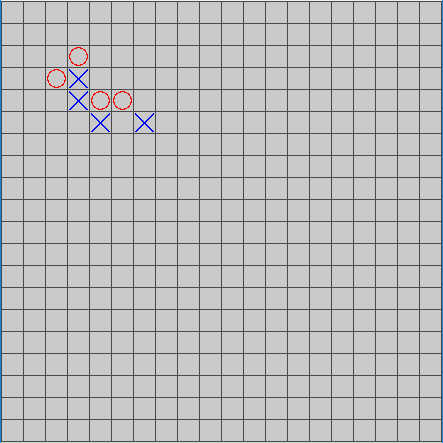

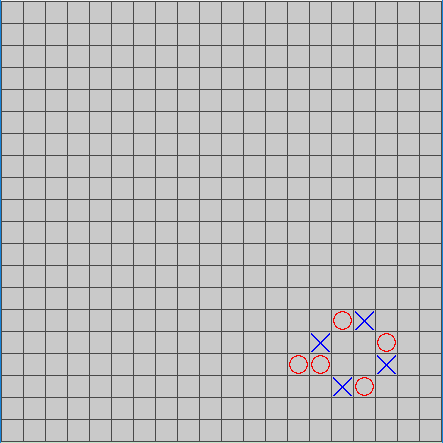

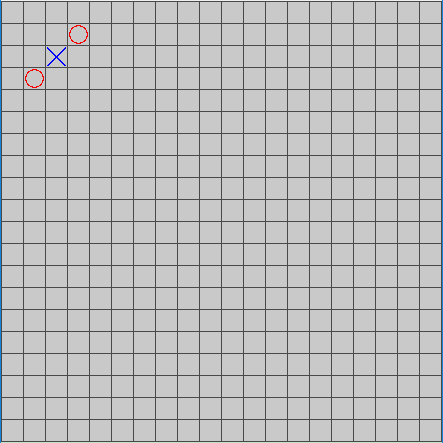

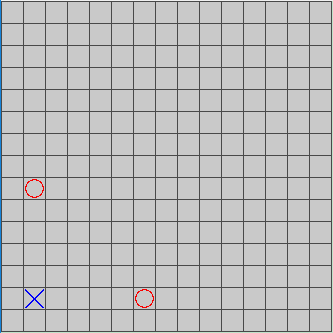

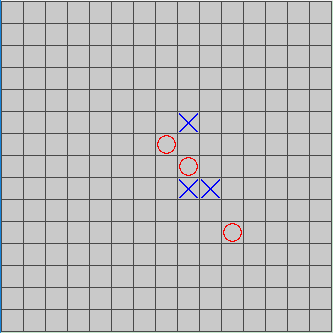

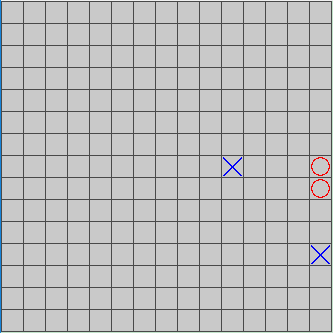

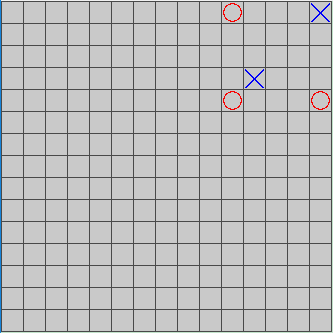

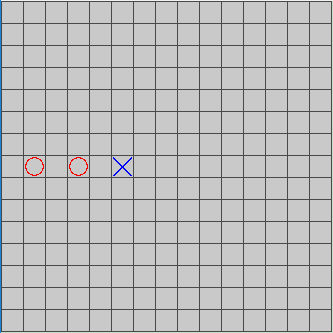

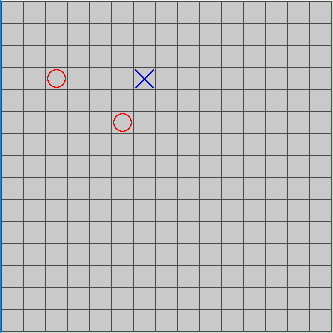

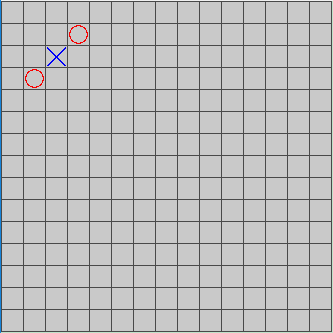

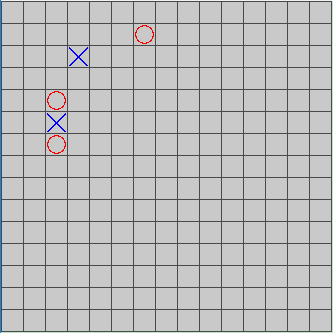

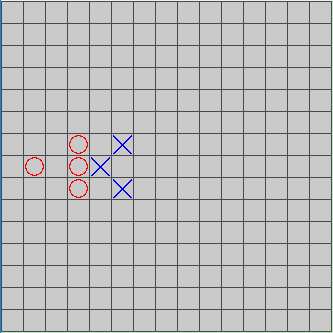

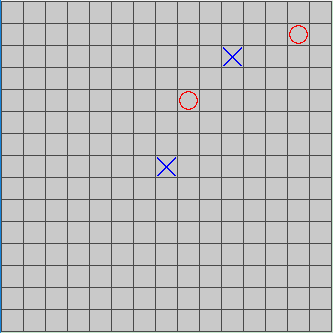

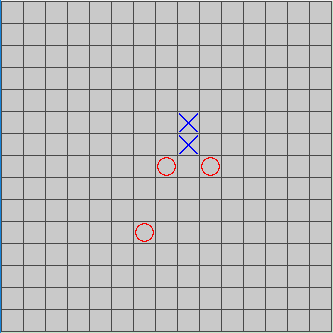

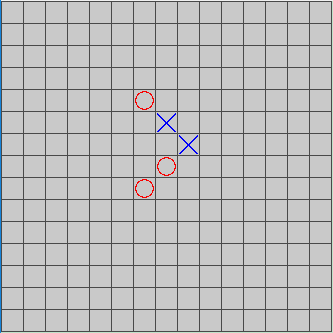

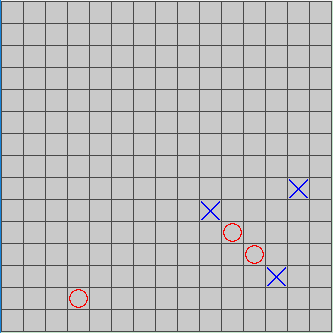

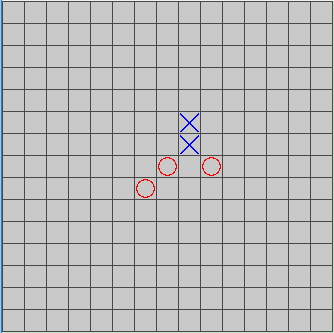

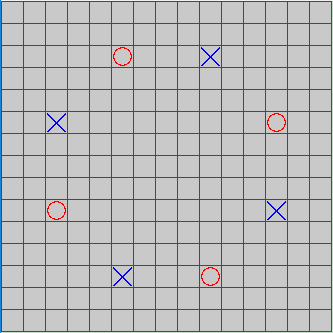

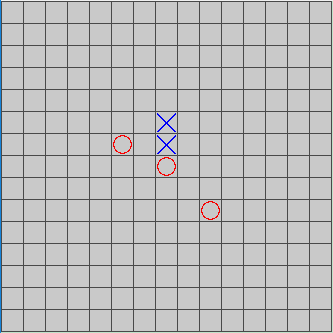

- Openings for the freestyle league:

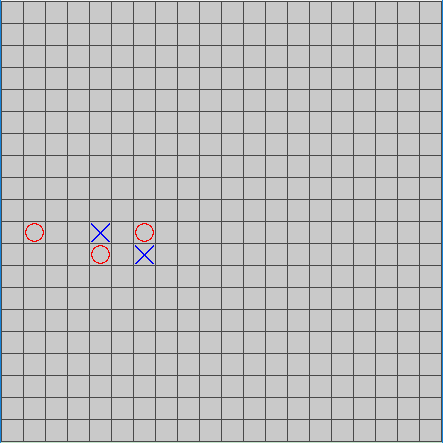

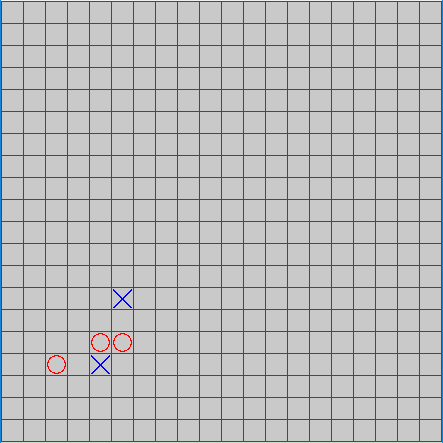

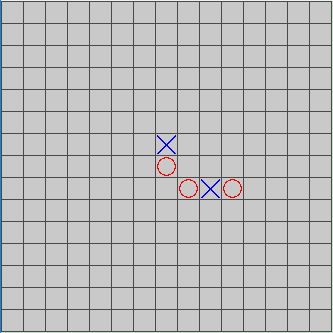

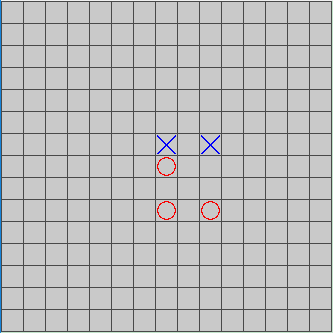

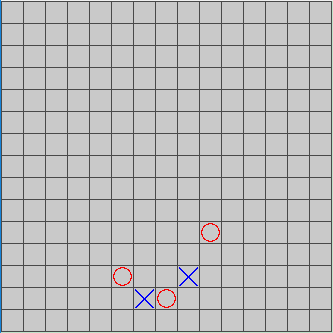

- Openings for the standard league:

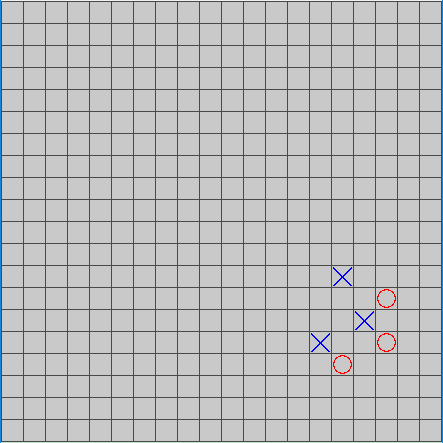

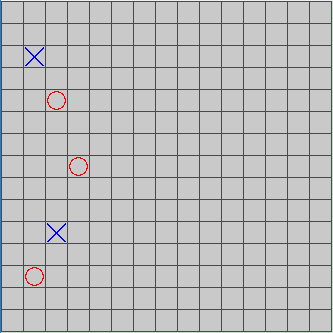

- Openings for the renju league:

Since we had more cores this year, we prepared 12 openings for every rule, twice as many as last year. We believe this helps reduce the randomness of the tournament results.

What is new?

- Updates

- Carbon 2017 - A bug was fixed.

- Chis 2017 - Sped up.

- Goro 2017 - Some parameters were changed.

- Pela 2017 - A bug was fixed.

- SlowRenju 2017 - The algorithm was modified slightly; a bug that produced false-positive forbidden moves under the renju rule was fixed; faster than the previous version.

- Sparkle 2017 - Slightly improved from the previous AI, Ignitor.

- sWINe 2017 - Tuned a little; now supports hybrid x86-64 for a speedup.

- XoXo 2017 - Completely rewritten; the heuristic function was improved; now supports hybrid x86-64 for a speedup.

- Yixin 2017 - The algorithm was improved; bug fixes.

- Zetor 2017 - Contains some improvements and a bug fix.

- New AI

- Djall 2017 - a Gomoku program developed by Ladislav Petr. Djall supports freestyle rule.

- Embryo 2017 - a Gomoku program developed by Mira Fontan. The AI is written in C++14 and uses alpha-beta search with search extensions, the null-move heuristic, and the history heuristic. Embryo supports freestyle rule.

- GammaKu 2017 - a Gomoku program developed by Zhihao Zhou and Ziyi Dou. GammaKu supports freestyle rule.

- Whose 2017 - a Gomoku and Renju program developed by Wen Hou. Whose supports freestyle, standard, and renju rules.

- Wine 2017 - an open-source Gomoku program developed by Jinjie Wang. Wine supports freestyle rule.

- AIs Removed

- HGarden

- In the past year, we were informed that HGarden was plagiarized from Carbon Gomoku. Concretely, we were notified that the string "AICarbon" can be found by opening the .exe file of HGarden with a text editor. Further in-depth analysis showed that the logic of HGarden is quite similar to that of Carbon. Given this, and considering that Carbon Gomoku has been an open-source AI since 2002, it is very likely that HGarden is based on Carbon. However, the author of HGarden does not mention Carbon Gomoku anywhere in HGarden. In addition, the authorship requirement of Gomocup states that one person may submit at most one AI to the tournament as the first author, and Carbon Gomoku joined Gomocup in 2016. Therefore, we think it would be inappropriate for HGarden to remain in Gomocup.

- Before making the above decision, we contacted the author of HGarden to ask whether he thought any part of the accusation was untrue. However, we did not receive any response.

- Nabamoku

- In Gomocup 2016, Nabamoku caused a problem: the computers became nearly unresponsive while running Nabamoku, even when we restricted it to one core by setting the CPU affinity. Because of this issue, we had to restart several games last year to ensure that the other AIs were not affected. To avoid the same problem, we decided to remove Nabamoku from the tournaments starting this year.


As in Gomocup 2016, there were 3 freestyle groups, 1 fastgame group, 1 standard group, and 1 renju group this year. AIs were divided into the freestyle groups according to their placement in the last tournament. For freestyle 2 and 3, the top 4 AIs were moved up to the next group. If the top k (k>4) places in a group were all taken by new (or updated) AIs, then all these k AIs would advance to the next group.
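The promotion rule above can be sketched in a few lines of Python. This is only one reading of the rule, not the organizers' code: the function name `advancing_ais`, its parameters, and the choice to take the longest unbroken run of new or updated AIs at the top of the table are assumptions made for illustration.

```python
def advancing_ais(ranking, new_or_updated, base_quota=4):
    """Return the AIs that move up to the next freestyle group.

    ranking: AI names ordered from first place to last place.
    new_or_updated: set of AI names that are new or updated this year.
    base_quota: number of AIs that always advance (4 in the report).
    """
    # Count how many consecutive places, starting from the top,
    # are held by new or updated AIs.
    k = 0
    for name in ranking:
        if name not in new_or_updated:
            break
        k += 1
    # The top 4 always advance; a longer unbroken run of new or
    # updated AIs at the top advances as a whole (assumed reading).
    return ranking[:max(base_quota, k)]
```

With the hypothetical ranking `["A", "B", "C", "D", "E", "F"]`, the call `advancing_ais(ranking, {"A", "B", "C", "D", "E"})` returns the first five names, while `advancing_ais(ranking, {"A", "C"})` returns the first four.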

Freestyle Ranking 2016

- YIXIN

- RENJUSOLVER

- SLOWRENJU

- TITO

- GORO

- HEWER

- ONIX

- SWINE

- HGARDEN

- CARBON

- GMOTOR

- PELA

- CHIS

- KANEC

- ZETOR

- JUDE

- EULRING

- PECUCHET

- XOXO

- NOESIS

- IGNITOR

- MENTAT

- NABAMOKU

- PUSKVOREC

- IMRO

- FASTGOMOKU

- PISQ

- BENJAMIN

- VALKYRIE

- STAHLFAUST

- LICHT

- FIVEROW

- CRUSHER

- PUREROCKY

- PROLOG

- MUSHROOM

As in the last tournament, the memory limit and the time limits per move and per match were the same as in Gomocup 2016:

| TOURNAMENT | TIME LIMIT PER MOVE [S] | TIME LIMIT PER MATCH [S] | MEMORY LIMIT [MB] | BOARD SIZE | RULE FOR WIN |
|---|---|---|---|---|---|
| Freestyle 1 league | 300 | 1000 | 350 | 20 | five or more stones |
| Freestyle 2 league | 30 | 180 | 350 | 20 | five or more stones |
| Freestyle 3 league | 30 | 180 | 350 | 20 | five or more stones |
| Fastgame | 5 | 120 | 350 | 20 | five or more stones |
| Standard | 300 | 1000 | 350 | 15 | exactly five stones |
| Renju | 300 | 1000 | 350 | 15 | renju rule |

Since the rationale behind three points for a win, which was used in previous tournaments, does not really apply to competition between AIs, this year we switched to the Elo rating system, which we believe is more suitable for Gomocup.
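For readers unfamiliar with Elo, the following minimal sketch shows the textbook logistic formula for the expected score and a simple per-game rating update. The report does not say how Gomocup ratings are actually computed, so the function names, the 400-point scale, and the K-factor of 32 are assumptions for illustration only.

```python
def expected_score(rating_a, rating_b):
    """Expected score of player A against player B (between 0 and 1)."""
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / 400.0))


def update(rating_a, rating_b, score_a, k=32.0):
    """Return the new ratings of A and B after one game.

    score_a is 1.0 for a win by A, 0.5 for a draw, 0.0 for a loss.
    k is the K-factor (an assumed value, not taken from the report).
    """
    delta = k * (score_a - expected_score(rating_a, rating_b))
    return rating_a + delta, rating_b - delta
```

Unlike a fixed three points per win, the rating change here depends on the opponent's strength: beating a much weaker AI moves the rating very little, while beating a stronger one moves it a lot.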

This year we used GomocupJudge, a new tournament system for Gomocup, to replace Piskvork which had been used in Gomocup for many years. We made the change because of the following concerns:

- A tournament usually needs to be restarted if any unexpected issue arises. In previous years, all games of a tournament, including those not affected by the issue, had to be replayed. With GomocupJudge, a tournament can easily be restarted by replaying only the affected games, which saves a lot of time.

- Three points for a win was replaced by the Elo rating system to evaluate the AIs’ strength. Piskvork does not support the Elo rating system, while GomocupJudge does.

- In Piskvork, games are played opening by opening. This sometimes makes the tournament lose its suspense early. In GomocupJudge, however, the higher an AI's Elo is, the later its games are played.
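The first and third points can be illustrated with a small sketch. It is not GomocupJudge's actual code: the game representation (a tuple of two AI names and an opening index), the function names, and the use of the stronger participant's Elo as the sort key are all assumptions.

```python
def pending_games(all_games, finished, invalidated):
    """Games to play after a restart.

    A game is pending if it was never finished, or if it was finished
    but later invalidated (for example, affected by a judging bug).
    """
    return [g for g in all_games if g not in finished or g in invalidated]


def schedule(games, elo):
    """Order games so that games involving higher-rated AIs come later.

    games: list of (ai_1, ai_2, opening_index) tuples.
    elo: dict mapping AI name to its current Elo rating.
    The sort key (the higher rating of the two players) is an assumption.
    """
    return sorted(games, key=lambda g: max(elo[g[0]], elo[g[1]]))
```

Because `sorted` is stable, games with the same key keep their original relative order, so a restart that replays only a few games still produces a predictable schedule.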

Compared with Gomocup 2016, we had many more technical difficulties this year, most of which came from GomocupJudge.

- At the beginning of freestyle 3, GammaKu crashed very often. Since we had tested it before Gomocup and it had seemed to work well, we initially thought the crashes were caused by GomocupJudge. After an investigation, however, we found that when the opening is near the edge, GammaKu sometimes produces illegal moves outside the board. In the meantime, we noticed that GomocupJudge sometimes failed to kill the process of an AI that had crashed or timed out, and we managed to fix that bug.

- During freestyle 3, we were informed that the online boards were shown incorrectly with offset (-1,-1). Meanwhile, the duration of online boards was not shown correctly either. The first problem was due to different coordinate systems used by GomocupJudge (starting from (0,0)) and Gomocup Online (starting from (1,1)), and it was fixed quickly. The second problem was due to different time representation formats and was fixed shortly after the beginning of freestyle 2.

- Benjamin had been in Gomocup since 2003 with reasonably stable performance. However, it crashed frequently this year. To make sure the crashes were not due to problems in GomocupJudge, we spent some time analyzing its games. We found that Benjamin sometimes made repeated moves on (0,0), and that crashes of a similar type had also occurred in previous tournaments, though not as frequently as this year. Moreover, we tested Benjamin in Piskvork and it had a similar crash rate. So we concluded that the crashes came from bugs in Benjamin. In particular, we speculated that the unconventional openings this year made the abnormal behavior happen more frequently than before.

- During fastgame, we noticed that when running tens of games simultaneously, uploading the real-time game records to Gomocup Online was very slow. To reduce server load, we reduced the refresh rate of the online board and stopped uploading offline results to the server temporarily.

- The server-client communication failed occasionally during the first half of the tournament, which led to crashes of GomocupJudge. Although the crash did not affect the results, it did slow down the progress of the tournament. After hours of debugging, we finally located and fixed the bug.

- After the standard league started, we noticed that several games were judged wrongly. The bug did not take us much time to fix, and the games influenced by the bug were replayed. A similar issue happened in the Renju league and was also quickly solved.
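Two of the issues above, moves outside the board or on an occupied square, and the (-1,-1) offset between coordinate systems, come down to small checks and conversions. The sketch below is illustrative only; the function names and the data representation (moves as `(x, y)` tuples, occupied squares as a set) are assumptions and are not taken from GomocupJudge or Gomocup Online.

```python
def is_legal(move, board_size, occupied):
    """Check a 0-based move: it must be on the board and on an empty square."""
    x, y = move
    on_board = 0 <= x < board_size and 0 <= y < board_size
    return on_board and move not in occupied


def to_one_based(move):
    """Convert a 0-based (x, y) move to 1-based coordinates.

    This mirrors the mismatch described above: one system starts
    at (0,0) and the other at (1,1).
    """
    x, y = move
    return (x + 1, y + 1)
```

For example, on a 20x20 board `is_legal((20, 3), 20, set())` is false because x is out of range, and `is_legal((0, 0), 20, {(0, 0)})` is false because the square is already taken, which is the kind of repeated move described for Benjamin.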

The 3 new AIs Embryo, Wine, and Whose were indisputably the top 3 AIs in freestyle 3, followed by Pisq, a veteran AI in Gomocup. They advanced to freestyle 2. Benjamin and GammaKu did not receive an Elo rating because of their high crash rates. Note that GammaKu had over 100 crashes yet still won 151 games. It would be a strong competitor if its crash bug could be fixed.

As in freestyle 3, Wine, Whose, and Embryo were again the top 3 in freestyle 2, though their relative ranking was different. Interestingly, Embryo beat every one of its opponents, yet it did not have the highest Elo rating, because it lost more games to other AIs than Wine and Whose did. Kanec, the new Zetor, and the new XoXo were very close in strength, and their competition for 4th place was fierce. In the end, Zetor won the last ticket to freestyle 1 by 9 Elo.

While Yixin led the table by a wide margin, RenjuSolver and Goro were quite close in their competition for 2nd place, and RenjuSolver finished with an advantage of only 12 Elo over Goro. The competition between Wine, Embryo, and Whose in freestyle 1 was not as tight as in freestyle 3 and 2, and their relative ranking changed again. The winner of the freestyle league was Yixin. Second place was taken by RenjuSolver, and third by Goro.

The fastgame league was won by Yixin. The second was Goro, and the third was Hewer. GammaKu did not receive an Elo rating because of its high crash rate.

There was a very interesting opening (the 2nd one) for the standard league in which both sides were given an overline at the beginning. Some AIs could not evaluate that kind of position well and therefore behaved strangely, for example by adding pieces to extend their overline.

As in 2016, Yixin was the winner of the standard league. The games between RenjuSolver, SlowRenju, Hewer, and Tito were very tight. In the end, the second was RenjuSolver, and the third was SlowRenju.

It was the second year that Gomocup had a renju league. The winner of the renju league was Yixin. Unlike last year, the order of RenjuSolver and SlowRenju was uncertain until the very end. In the end, second place was taken by RenjuSolver, and the third was SlowRenju.

You can download complete results and openings here.